AMD Price Forecast - AMD at $199 — EPYC Hits 40-50% Server Share, MI455X Targets 432GB HBM4

AMD (NASDAQ: AMD) rises 1.67% on a red market day as Nvidia GTC validates trillion-dollar AI infrastructure demand, EPYC approaches majority server share | That's TradingNEWS

AMD (NASDAQ: AMD) at $199.58 — GTC 2026 Confirmed the $1 Trillion AI Opportunity, EPYC Is Taking 40-50% Server Share, and the MI455X Memory Advantage Is the Trade Nobody Has Fully Priced

Advanced Micro Devices (NASDAQ: AMD) is trading at $199.58 Wednesday — up 1.67%, or $3.27 on the session — against a backdrop of broad market weakness driven by the Iran escalation and a hotter-than-expected February PPI print. The fact that AMD is green on a day when the S&P 500 is down 0.57% is not an accident. It is a direct function of the Nvidia GTC 2026 conference having confirmed a trillion-dollar AI infrastructure build-out that creates structural demand not just for the market leader but for every credible scaled alternative — and AMD is the only alternative that qualifies at hyperscaler scale. The stock sits within a 52-week range of $76.48 to $267.08, is trading at a P/E ratio of 76.56, carries a market cap of $325.75 billion, and has an average daily volume of 38.94 million shares. The day range on Wednesday is $195.75 to $200.75 — a tight $5 band that reflects a stock consolidating at a critical $200 level while the broader market sells off around it. That relative strength, on a macro-negative day, is the first signal that institutional money is treating the current $195-to-$200 zone as an accumulation range rather than a distribution zone.
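
The session figures above can be cross-checked with a few lines of arithmetic. This is a minimal sketch that uses only the numbers quoted in the paragraph; the variable names are illustrative.

```python
# Figures quoted in the article for Wednesday's session.
price = 199.58                      # last trade
change = 3.27                       # dollar gain on the session
day_low, day_high = 195.75, 200.75  # intraday range

prior_close = price - change              # implied previous close
pct_change = change / prior_close * 100   # session gain in percent
day_band = day_high - day_low             # width of the intraday range

print(f"implied prior close: {prior_close:.2f}")
print(f"session change: {pct_change:.2f}%")
print(f"day range width: {day_band:.2f}")
```

The implied prior close is $196.31, which is consistent with the quoted 1.67% gain and the $5 intraday band.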

Why GTC 2026 Was Paradoxically the Most Bullish Event of the Year for AMD

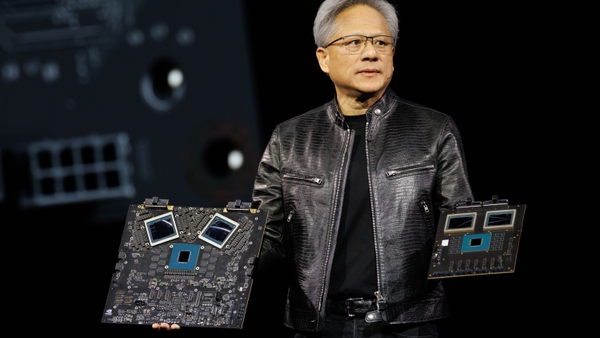

Jensen Huang's GTC 2026 keynote announced a path toward a trillion-dollar AI infrastructure empire — and the market's immediate instinct was to interpret this as a Nvidia (NVDA) story. That interpretation misses the more important second-order implication. A trillion-dollar infrastructure build-out is not a problem that any single company can solve. It creates structural demand for the entire ecosystem, and it specifically creates demand for a credible alternative to a vendor that is simultaneously expanding its addressable market, raising prices, and deepening its proprietary ecosystem lock-in. Every dollar of infrastructure spend that Nvidia cannot supply — and the supply constraint is real, with waiting lists extending months across the hyperscaler community — is a dollar that flows to AMD.

The specific Nvidia announcements that matter most for AMD's competitive positioning were not the ones that received the most headline attention. The Vera CPU launch — Nvidia's ARM-based custom processor designed specifically for AI workloads — is the announcement that defines the strategic landscape most clearly. Nvidia is trying to become a full-stack AI infrastructure provider: GPU, CPU, networking, software, all integrated into a proprietary system. This is a rational expansion of Nvidia's business model. It is also a strategy that forces every hyperscaler to make a binary choice: buy into the Nvidia walled garden completely, or find an alternative that provides comparable performance without the vendor lock-in. AMD is the alternative. The Vera CPU accelerates that dynamic rather than threatening it, because hyperscalers — Meta, Google, Microsoft, Amazon — have a deeply embedded institutional aversion to single-vendor dependency, particularly from a vendor that is simultaneously their supplier and their competitor in AI services.

AMD's Helios rack architecture, built on open standards and supported by the ROCm software ecosystem, gives its clients full control over their AI infrastructure stack. The x86 compatibility of EPYC CPUs means enterprise customers can integrate new AMD silicon without rewriting code or migrating off existing server architecture. This is not a minor convenience — it is a total cost of ownership advantage that directly drives purchasing decisions at the CFO level, not just the engineering level. When Nvidia pushes further toward vertical integration with Vera, the customers who decide they don't want to hand their entire AI infrastructure stack to a single vendor call AMD. That is not a theoretical outcome. It is the current operating reality confirmed by the Meta deal, the Samsung memory partnership, and the Celestica Helios rack-scale platform announcement.

EPYC's 40-50% Server Market Share: The Compounding Engine That Wall Street Treats as a Footnote

Advanced Micro Devices (NASDAQ: AMD) is approaching 40% to 50% share in the server CPU market — a statistic that receives almost no attention in mainstream coverage of the stock because the GPU narrative dominates every conversation. That is a mistake. EPYC's server market share gain is not a footnote — it is a structural event that has decades of compounding implications and that creates a flywheel effect for the GPU business that nobody is modeling correctly.

Here is why EPYC's CPU share matters for the GPU story: every server built on an EPYC CPU is a server that is already operating in the AMD ecosystem — already running AMD drivers, already familiar with ROCm, already optimized for AMD's software stack. When that server needs GPU acceleration added — for inference, for training, for agentic AI workloads — the path of least resistance is to add an Instinct GPU to an existing EPYC-based system rather than introducing an entirely new vendor into an established infrastructure environment. EPYC's 40-to-50% server market share is AMD's installed base for GPU upsell. Intel (INTC) has spent years trying to defend its server CPU position and continues to lose ground. AMD's Zen 6 EPYC "Venice," shipping later in 2026, is expected to deliver improved performance and efficiency gains targeting high-volume inference and agentic workflows — extending the share trajectory that has brought EPYC from a niche challenger to a near-majority market position.

CEO Lisa Su's statement that server CPU TAM is expected to grow in "strong double-digits" in 2026 deserves to be taken at face value — and potentially exceeded. The agentic AI inflection creates a specific category of demand for CPU-based compute that hasn't fully materialized in analyst models yet. Agentic AI workflows — the multi-step reasoning and autonomous task completion systems that are now deploying at scale in enterprise environments — are frequently "serial" in their computational structure: send a query, wait for a response, process the result, send the next query. This logic-heavy, sequential processing is what CPUs are specifically optimized for. GPUs excel at parallel processing — running thousands of simultaneous operations. But when an AI agent is managing a CRM workflow, querying an ERP database, or coordinating between APIs, it is doing serial work that scales efficiently on CPUs. The agentic AI inflection is a CPU demand story as much as a GPU demand story, and AMD's EPYC leadership positions the company at the center of both dimensions.
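
One standard way to reason about why serial work gains little from massively parallel hardware is Amdahl's law, which the article does not itself invoke. The sketch below is illustrative only: the parallel fractions and the worker count are hypothetical, not measurements of any real agentic workload.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper bound on speedup when only part of a job can run in parallel."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)

# Hypothetical workloads: the fractions are illustrative, not measured.
workloads = [
    ("mostly parallel (e.g. large matrix math)", 0.99),
    ("mostly serial (e.g. query, wait, next query)", 0.20),
]
for label, fraction in workloads:
    print(label, round(amdahl_speedup(fraction, workers=1000), 2))
```

Under these assumed fractions, a thousand parallel units speed up the mostly parallel job by roughly 91x but the mostly serial job by only about 1.25x, which is the intuition behind treating sequential agent logic as a CPU workload.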

The AMD-Nutanix (NTNX) Partnership: $250 Million for the Agentic AI Enterprise Stack

AMD (NASDAQ: AMD) announced a strategic partnership with Nutanix (NTNX) in late February — investing up to $250 million, starting with $150 million at the commencement of the partnership and up to an additional $100 million to support joint engineering initiatives — to co-develop a full-stack agentic AI platform optimized for enterprise deployment. The joint platform will integrate AMD EPYC CPUs, AMD Instinct GPUs, the ROCm software ecosystem, and the AMD Enterprise AI platform into Nutanix's Cloud and Kubernetes Platforms, creating an open-source, full-stack solution for agentic AI deployment across enterprise environments.

The timing of this partnership is precise. Industry survey data shows agentic AI adoption nearing a 50% penetration rate in enterprise environments — with adoption expanding rapidly across corporate functions including finance, legal, customer service, and operations. More than 85% of surveyed respondents have indicated the mission-critical role of open-source technology in their enterprise agentic AI strategies, specifically to optimize compatibility with existing technology stacks and reduce deployment complexity. That 85% preference for open-source in enterprise agentic AI deployment is a direct validation of AMD's strategic positioning versus Nvidia's proprietary vertical integration approach. Enterprise IT departments do not want to be locked into closed ecosystems — they want solutions that integrate with their existing SAP, Salesforce, Oracle, and ServiceNow environments without requiring a wholesale infrastructure replacement.

The Nutanix partnership is the execution vehicle for capturing that 85% open-source preference in the agentic AI enterprise market. The first jointly developed agentic AI platform is expected to arrive in late 2026, which aligns precisely with the agentic AI inflection that Nvidia's own Jensen Huang acknowledged in the Q4 FY 2026 earnings call — noting that "agentic AI has turned an inflection point, and it literally happened in the last couple of 2, 3 months." When the largest GPU manufacturer in the world acknowledges an inflection in the very market segment that your partnership is designed to capture, the timing of the AMD-Nutanix deal looks prescient rather than opportunistic.

The MI455X Memory Advantage: 432GB of HBM4 and Why This Beats Nvidia's Rubin on the Metric That Actually Matters

The most technically significant competitive development in the AMD GPU roadmap — and the one that has the greatest potential to shift market share in the near term — is the MI455X GPU's rumored 432GB of HBM4 memory. Nvidia's Vera Rubin, by comparison, is reported to offer less high-bandwidth memory capacity. This differential is not a minor specification gap — it directly addresses what Jensen Huang himself identified at GTC as the most critical limiting factor in AI advancement: memory is now the bottleneck, not compute.

The logic is straightforward. Agentic AI systems require persistent context, large model states, and real-time reasoning. Running a large language model at inference scale means keeping the model weights, the KV cache, and the active context all in memory simultaneously. The larger the memory capacity per GPU, the larger the model that can run without memory-induced latency from constant data movement between GPU memory and system memory. If AMD's MI455X can run larger models on fewer GPUs than Nvidia's comparable offering, it delivers materially higher performance per watt and performance per dollar — which in an inference workload environment, where cost efficiency is the primary purchasing criterion rather than raw peak performance, is the winning metric.
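
The capacity argument can be made concrete with a rough sizing sketch. Everything about the model here is hypothetical (parameter count, weight precision, KV cache size), and the smaller capacity is a placeholder rather than a specification for any real product. Only the 432GB figure comes from the article, where it is described as rumored. The sketch also ignores activations, fragmentation, and framework overhead.

```python
import math

def gpus_needed(params_billion, bytes_per_param, kv_cache_gb, gpu_memory_gb):
    """GPUs required to hold weights plus KV cache entirely in GPU memory."""
    # 1e9 parameters at N bytes each is N gigabytes (decimal GB).
    weights_gb = params_billion * bytes_per_param
    total_gb = weights_gb + kv_cache_gb
    return math.ceil(total_gb / gpu_memory_gb)

# Hypothetical model: 400B parameters at 1 byte each, plus 200 GB of KV cache.
model = dict(params_billion=400, bytes_per_param=1, kv_cache_gb=200)

large = gpus_needed(**model, gpu_memory_gb=432)  # capacity rumored for the MI455X
small = gpus_needed(**model, gpu_memory_gb=256)  # placeholder, not a real product spec
print(large, small)
```

For this assumed 600 GB footprint, the larger capacity fits the model on two GPUs where the placeholder capacity needs three. The real comparison depends on actual product specifications and workload details that the article does not provide.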

The MI400 Series (including MI450) launches later in 2026 as the near-term catalyst, with MI455X following as the memory-centric competitive response to Nvidia's Rubin. The roadmap progression — from the current Instinct positioning to MI450, then MI455X — represents the architectural evolution from being a GPU competitor to being a memory-advantage platform. That transition is the most important product story AMD has told in years, and the market has not yet fully priced the competitive implications of a GPU that outperforms Nvidia's flagship on the specific metric that Nvidia's own CEO has identified as the industry's binding constraint.

Pensando Networking: Pollara 400, Vulcano 800Gbps, and the Tokens-Per-Watt Bottleneck

AMD (NASDAQ: AMD)'s Pensando networking roadmap is the least-discussed component of the bull case and arguably the most structurally important for long-term competitive positioning at rack scale. As AI clusters scale from tens of thousands of GPUs to hundreds of thousands and approaching millions, networking becomes the critical constraint — not just compute or memory. Cross-server communication latency and bandwidth directly determine whether a massive GPU cluster achieves its theoretical performance ceiling or falls short due to network-induced idle time.

The Pensando Pollara 400 AI NIC operates at speeds up to 400 gigabits per second and features a programmable P4 engine that allows customers to adapt the component as open standards, such as those from the Ultra Ethernet Consortium, evolve. It is also available in an OCP 3.0 compliant variant for seamless interoperability across industry-standard deployments. The next-generation Pensando Vulcano AI NIC advances to 800Gbps network throughput, double the Pollara 400's line rate, with a claimed 8x the scale-out bandwidth per GPU compared to the previous generation. That directly addresses the cross-server communication bottleneck that emerges when linking hundreds of thousands of GPUs into a single logical cluster. The Pensando Salina 400 DPU, the company's third-generation data processing unit, delivers up to 2x better performance and scale than its predecessor and competes directly against Nvidia's BlueField-4 DPU for networking and storage offloading in high-volume agentic AI token processing environments.
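
To see why line rate matters for cluster efficiency, the sketch below estimates ideal transfer time at the two quoted line rates and the resulting share of time a GPU spends computing. The payload size and compute time per step are hypothetical, protocol overhead is ignored, and communication is assumed not to overlap with compute.

```python
def transfer_seconds(payload_gigabytes: float, link_gbps: float) -> float:
    """Ideal time to move a payload over one link, ignoring protocol overhead."""
    payload_gigabits = payload_gigabytes * 8
    return payload_gigabits / link_gbps

def busy_fraction(compute_s: float, comm_s: float) -> float:
    """Share of each step spent computing when communication is not overlapped."""
    return compute_s / (compute_s + comm_s)

payload_gb = 10.0  # hypothetical data exchanged per step
compute_s = 0.5    # hypothetical compute time per step

for gbps in (400, 800):  # line rates quoted for Pollara 400 and Vulcano
    comm = transfer_seconds(payload_gb, gbps)
    print(gbps, round(comm, 3), round(busy_fraction(compute_s, comm), 3))
```

With these assumed inputs, doubling the line rate halves the transfer time from 0.2 to 0.1 seconds and lifts the compute share of each step from about 71% to about 83%.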

The Pensando roadmap positions AMD to compete not just at the component level — GPU versus GPU, CPU versus CPU — but at the rack-scale system level, where the total solution encompasses compute, memory, and networking in an integrated architecture. This is significant because Nvidia's Vera Rubin strategy is explicitly a system-level competition: Nvidia is building a complete rack-scale solution where every component — the GPU, the CPU, the NIC, the NVLink interconnect — is proprietary and optimized to work together. If AMD can deliver competitive rack-scale performance through open standards — Helios rack architecture, Pensando networking, EPYC CPUs, Instinct GPUs, ROCm software — it captures the enterprise and hyperscaler demand that specifically wants to avoid the Nvidia system-level lock-in.

The Meta Deal: Why Hyperscaler Commitment to AMD Capacity Requirements Is the Most Important Data Point

The Meta (META) agreement with AMD (NASDAQ: AMD) — which involves significant capacity commitments stretching years into the future — is being read by most market participants as a large number with a complex structure involving warrants. The more important interpretation is simpler: hyperscalers with Meta's scale and technical sophistication do not commit to multi-year capacity agreements unless they have high conviction that the supplier will deliver. The specific financial structure — including warrants — creates aligned incentives between Meta and AMD in a way that pure purchase agreements do not. Meta's warrant position means that Meta benefits directly from AMD's stock appreciation, which means Meta has a financial interest in AMD executing against its roadmap, which means Meta will actively support AMD's technical development in ways that go beyond a normal supplier relationship.

The Meta deal also sends a clear signal to every other hyperscaler: AMD has achieved the credibility threshold required for large-scale, long-duration infrastructure commitment. This is not the same as winning a pilot program or getting a test deployment. Multi-year capacity commitments at hyperscaler scale are the equivalent of a permanent seat at the table in the AI infrastructure supply chain. AMD now has that seat. The question is not whether the company belongs in the AI infrastructure conversation; that question is settled. The question is how quickly the financial results reflect the operational reality that EPYC is the server CPU of choice in the modern data center, Instinct GPUs are deployed at hyperscaler scale, and ROCm has achieved sufficient ecosystem maturity to support production workloads.

The Samsung memory partnership announced alongside the Celestica-Helios rack-scale platform collaboration further validates that AMD's ecosystem is maturing in the right direction. Samsung's involvement in AMD AI memory development is a direct competitive response to the SK Hynix-Nvidia HBM relationship — and AMD partnering with Samsung for HBM4 development ensures it has a credible memory supply chain for the MI455X's 432GB ambition that doesn't depend on Nvidia's preferred supplier.

29x Forward Earnings at $199: The Valuation Case for $60-80 Billion in Revenue

AMD (NASDAQ: AMD) trades at approximately 29x forward earnings — a multiple that looks expensive in absolute terms until you run the revenue trajectory. The valuation framework that most directly challenges the current multiple is the path to $60-to-$80 billion in revenue. At 29x forward earnings on a company with AMD's growth rate, the question is not whether the multiple is high — it is whether the earnings denominator is growing fast enough to compress the multiple through earnings expansion rather than multiple contraction. The answer, on the current trajectory, is yes.

The specific modeling framework: the upside scenario projects a five-year revenue CAGR of 42% through 2030, with earnings growing at a 49% CAGR over the same period. The base case uses 41% revenue CAGR and 48% earnings CAGR. The terminal value uses a 3.5% perpetual growth rate on 2030 EBITDA with a 9.5% discount rate. Running that framework produces an upside scenario intrinsic value near $260 per share — representing approximately 30% upside from Wednesday's $199.58 price. The base case intrinsic value falls below that but still implies substantial upside from current levels.
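
The inputs quoted above can be unpacked with a short sketch. It compounds the stated growth rates over five years and computes the terminal multiple implied by the stated perpetual growth and discount rates, assuming the standard Gordon growth form. The article does not publish the full model (margins, share count, net debt), so this does not attempt to reproduce the $260 figure.

```python
def compound(rate: float, years: int) -> float:
    """Growth multiple after compounding an annual rate for a number of years."""
    return (1 + rate) ** years

def gordon_terminal_multiple(growth: float, discount: float) -> float:
    """Terminal value per unit of final-year cash flow (Gordon growth form)."""
    return (1 + growth) / (discount - growth)

years = 5
print("upside revenue multiple :", round(compound(0.42, years), 2))
print("upside earnings multiple:", round(compound(0.49, years), 2))
print("base revenue multiple   :", round(compound(0.41, years), 2))
print("base earnings multiple  :", round(compound(0.48, years), 2))
print("terminal multiple       :", round(gordon_terminal_multiple(0.035, 0.095), 2))
print("upside to $260 (%)      :", round((260 / 199.58 - 1) * 100, 1))
```

On these rates, revenue would grow roughly 5.6x to 5.8x and earnings roughly 7.1x to 7.3x over five years, and a 3.5% perpetual growth rate against a 9.5% discount rate implies a terminal multiple of about 17x. The gap between $199.58 and $260 works out to about 30%.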

More important than the specific price target is the multiple compression dynamic. If AMD executes the MI450 ramp in H2 2026, continues EPYC's CPU market share trajectory, and captures incremental agentic AI demand through the Nutanix partnership and the Pensando networking buildout, earnings grow faster than the multiple. At 29x forward earnings today, if earnings grow 48% to 49% annually, the multiple compresses to roughly 20x after one year and roughly 13x after two, without the stock moving at all. That means the stock is cheap not on an absolute basis but on a growth-adjusted basis, and the market doesn't need to expand the multiple for the bull case to work. The earnings growth does the work alone.
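
The compression arithmetic is simple enough to check directly. This minimal sketch holds the share price flat and divides the starting multiple by compounded earnings growth; the function name is illustrative.

```python
def forward_multiple(start_multiple: float, earnings_growth: float, years: int) -> float:
    """Earnings multiple after `years` of earnings growth with the share price held flat."""
    return start_multiple / (1 + earnings_growth) ** years

for growth in (0.48, 0.49):
    for years in (1, 2):
        multiple = forward_multiple(29, growth, years)
        print(f"growth {growth:.0%}, year {years}: {multiple:.1f}x")
```

At 48% to 49% annual earnings growth, a 29x multiple falls to about 19.5x after one year and about 13x after two, assuming the growth rate is actually achieved.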

The 52-week range tells the full story of the valuation journey: from $76.48 to $267.08. AMD at $199.58 is roughly 161% above its 52-week low and 25% below its 52-week high. The company at $199 is not the same company it was at $76: the MI400 Series is approaching launch, the Nutanix partnership is signed, the Helios rack-scale platform is in development with Celestica, the Meta deal is locked, and the EPYC server share is approaching 40-50%. The stock is cheaper on a forward earnings basis than it was at either extreme of the 52-week range.
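
The stock's position within its 52-week range can be computed from the three quoted prices. A minimal sketch, with illustrative variable names:

```python
price, low_52w, high_52w = 199.58, 76.48, 267.08

above_low = (price / low_52w - 1) * 100     # percent gain from the 52-week low
below_high = (1 - price / high_52w) * 100   # percent drawdown from the 52-week high
range_position = (price - low_52w) / (high_52w - low_52w) * 100  # place within the range

print(f"{above_low:.0f}% above low, {below_high:.0f}% below high, "
      f"{range_position:.0f}% of the way up the range")
```

The price sits about 161% above the low, about 25% below the high, and roughly two-thirds of the way up the range.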

The Inference Wave Is Structural, Not Cyclical — And AMD's Architecture Is Built for It

The shift from training-dominant AI workloads to inference-dominant workloads is the most consequential structural transition in the AI semiconductor market since the initial GPU adoption wave. Training workloads are intensive, capital-heavy, and concentrated among a small number of frontier model developers — OpenAI, Anthropic, Google DeepMind, Meta AI. Inference workloads are distributed, continuous, and owned by every enterprise that deploys an AI system to serve users at scale. The inference market is orders of magnitude larger in potential compute demand than the training market, and it has fundamentally different economics: cost per token, not raw peak performance, is the governing metric.
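
Cost per token can be expressed as a simple ratio of deployment cost to throughput. The sketch below is illustrative only: the GPU counts, hourly cost, and throughput are hypothetical and do not describe any real product or cloud price.

```python
def cost_per_million_tokens(gpu_count: int, gpu_hourly_cost: float,
                            tokens_per_second: float) -> float:
    """Serving cost per one million generated tokens for a fixed deployment."""
    hourly_cost = gpu_count * gpu_hourly_cost
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost / tokens_per_hour * 1_000_000

# Hypothetical deployments: same throughput and hourly rate, different GPU counts.
print(round(cost_per_million_tokens(3, 4.00, 5000), 3))
print(round(cost_per_million_tokens(2, 4.00, 5000), 3))
```

Holding throughput constant, serving the same model on two GPUs instead of three cuts the assumed cost per million tokens by a third, which is why GPU count per model matters more than peak performance in this framing.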

AMD's product roadmap — MI450 then MI455X — is specifically optimized for inference. The MI455X's rumored 432GB HBM4 memory position is an inference optimization, not a training optimization. Running large models at inference scale requires keeping more of the model in memory to avoid latency from memory swapping. More memory per GPU means larger models running at lower latency for the same number of GPUs. In inference deployments, where the cost per query directly determines the unit economics of an AI service, that efficiency advantage translates directly into customer preference.

The ROCm software ecosystem has crossed the threshold from "technically adequate" to "deployed at hyperscalers in production" — a transition that took years and is now largely complete. The ecosystem maturity question has been the primary objection to AMD GPU adoption for the past three years. That objection is now largely answered. Hyperscalers with Meta's technical depth are not running AMD Instinct GPUs in production unless the software ecosystem can support it reliably. The production deployment is the proof point that no benchmark comparison or roadmap announcement can substitute for.

Execution Risk: The Roadmap Dependency on H2 2026 Is Real and Cannot Be Dismissed

AMD (NASDAQ: AMD) at $199 is a Buy, but the execution risk embedded in the H2 2026 roadmap is a genuine consideration that should inform position sizing rather than thesis rejection. The bull case depends heavily on the MI450 ramp and the Helios rack-scale platform launch landing on schedule in the second half of 2026. Any delay in MI450 or Helios delivery would not just slow revenue recognition; it would raise questions about AMD's ability to maintain its competitive positioning against an Nvidia that is simultaneously launching Vera Rubin with its own memory and CPU advancements.

ROCm ecosystem stickiness remains a secondary risk. The software ecosystem has improved dramatically and is now deployed at production hyperscaler scale, but it remains more dependent on hyperscaler support than Nvidia's CUDA ecosystem, which has 15 years of developer adoption behind it. If any major hyperscaler reduces its ROCm investment or redirects development resources to CUDA, the ecosystem momentum AMD has built over the past two years could stall. That scenario appears unlikely given the Meta deal and the Samsung partnership, both of which represent multi-year commitments that would be expensive to unwind, but it warrants monitoring through quarterly earnings calls and hyperscaler capital expenditure guidance.

Networking is the third execution risk. At rack scale, connectivity is a critical performance determinant, and if AMD's Pensando roadmap falls behind Nvidia's NVLink and InfiniBand networking solutions in meaningful ways, AMD can compete at the component level but may fall short at the system level where hyperscalers increasingly make purchasing decisions. The Pensando Vulcano 800Gbps NIC and the Salina 400 DPU are the specific products that need to deliver competitive performance against Nvidia's networking stack to prevent this gap from widening.

The insider transaction history for AMD is worth examining in this context — executive buying or selling behavior relative to the MI450 development timeline provides an important signal about management's internal confidence in H2 2026 delivery. The full stock profile provides the comprehensive context for understanding how institutional positioning is evolving alongside the product roadmap.

Wall Street Ratings, Quant Score, and the SA Consensus: All Three Are Buy or Better

The rating picture for AMD (NASDAQ: AMD) is unusually consistent across methodologies. SA Analysts rate the stock Buy with a score of 4.11. Wall Street rates it Buy with a score of 4.42. The Quant model rates it Strong Buy with a score of 4.67, the highest tier. Three independent rating methodologies, using different data sources and analytical frameworks, arriving at the same directional conclusion is not noise. It is a convergent signal about a business that is executing on several fronts at once (EPYC share, Instinct GPU deployment, ROCm ecosystem, Pensando networking) in ways that show up across multiple data streams.

The SA Analysts Buy rating is the most conservatively derived of the three, requiring human analysis to corroborate what both the quantitative model and Wall Street consensus are showing. The quant Strong Buy at 4.67, in the highest rating tier, reflects the compound momentum across the quantitative metrics: revenue growth, earnings growth, margin trajectory, and price momentum. AMD's revenue grew year-over-year, the data center segment is the primary growth driver, and the earnings growth trajectory is outpacing multiple expansion expectations in a way that creates the valuation compression dynamic described above.

The Verdict on AMD (NASDAQ: AMD): Buy at $199 With Conviction, Scale on Weakness Toward $190

Advanced Micro Devices (NASDAQ: AMD) at $199.58 is a Buy — with specific conviction built on numbers rather than narrative. EPYC approaching 40-to-50% server market share is a structural event that Wall Street is treating as a footnote. The MI455X's rumored 432GB HBM4 memory advantage over Nvidia's Rubin directly addresses the memory bottleneck that Jensen Huang himself identified as the industry's primary constraint. The Meta multi-year capacity commitment is the hyperscaler validation that removes the "credibility" objection from the bear case. The Nutanix $250 million partnership positions AMD at the center of the enterprise agentic AI infrastructure market — a segment where 85% of enterprises prefer open-source solutions, which is precisely what AMD provides. The Pensando Pollara 400 NIC, Vulcano 800Gbps NIC, and Salina 400 DPU address the networking bottleneck at rack scale, competing directly against Nvidia's BlueField-4 for the tokens-per-watt efficiency market that is growing with every new generation of massive AI cluster.

At 29x forward earnings against a 48%-to-49% earnings CAGR, the stock is inexpensive on a growth-adjusted basis. The path to $60-to-$80 billion in revenue compresses the forward multiple through earnings growth without requiring any multiple expansion. The upside intrinsic value scenario of $260 per share implies 30% upside from $199. The 52-week low of $76.48 is the permanent marker of how deeply the market mispriced AMD before the AI infrastructure demand reality became undeniable. At $199, the market has partially corrected that mispricing but has not yet fully priced the convergence of EPYC CPU leadership, Instinct GPU hyperscaler deployment, MI455X memory advantage, Pensando networking buildout, and the agentic AI enterprise stack through Nutanix. Each of those is a separate revenue growth driver. Together, they are a compounding engine operating simultaneously across multiple product categories, and that compound growth is what 29x forward earnings is buying at Wednesday's price. The trade is: Buy at $199, scale aggressively into any weakness toward $190, and hold with conviction through the MI450 ramp in H2 2026.