AMD Stock Price Forecast: 44% PEG Discount, Samsung HBM4 at 3.3 TB/s - Why $350 Is the Real Target

AMD at $202.62 trades at 6.9x EV/Sales versus Nvidia's 11.2x as the $9.8B Q1 guide, MI455X's 432GB HBM4 advantage

Advanced Micro Devices (AMD) at $202.62 — Why the Private Credit Collapse, Samsung's HBM4 Partnership, and Nvidia's Vertical Integration Trap Are All Converging Into AMD's Most Important Year

Advanced Micro Devices (NASDAQ: AMD) is trading at $202.62 on Monday, March 23, 2026, down approximately 1% over the past month and roughly 8% in the red year-to-date. That performance looks disappointing on the surface but tells an incomplete story once you examine what is actually happening beneath the price action. The stock peaked at $264.33 over the past 52 weeks and traded as low as $78.21 in the prior year, a range that captures the full volatility of a company in genuine transition from chip supplier to AI infrastructure platform. The Seeking Alpha Quant rating sits at Strong Buy with a score of 4.68. Wall Street consensus is Buy at 4.42. SA Analysts rate it Buy at 4.15. Every major analytical framework points in the same direction. Yet the stock is 8% lower on the year while the AI narrative has been dominating every financial media outlet on the planet. That disconnect is not a red flag; it is the opportunity. The market is systematically mispricing what AMD is becoming, and three simultaneously developing catalysts are converging: the private credit bubble deflating, the Samsung HBM4 MOU signed March 18, and Nvidia's increasingly antagonistic vertical integration strategy unveiled at GTC 2026. Together they create the setup for one of the most compelling asymmetric risk-reward situations in the semiconductor sector heading into the second half of 2026.

AMD at $202.62 Trades at a 44% Discount to Sector Median PEG — The Math Points to $350

Start with the valuation, because the numbers are stark and the market's current pricing makes little sense when examined against the growth trajectory. AMD trades at a forward Non-GAAP PEG ratio of 0.70 against a sector median of 1.27, a 44% discount to peers on a growth-adjusted basis that implies the market is fundamentally skeptical about whether the earnings growth AMD is projecting will actually materialize. A forward PEG below 1.0 is the market's way of saying it does not fully believe the earnings growth story, and 0.70 against a median of 1.27 amounts to a 44% haircut to that story's credibility. If AMD is re-rated to the sector median PEG of 1.27, a level that simply requires the market to believe AMD's growth trajectory is as credible as the average semiconductor company's, the stock would rise by a factor of 1.27 / 0.70, or a little over 81%. Applied to the current $202.62 with growth estimates unchanged, that works out to roughly $368, so a target of approximately $350 sits just inside a full re-rating. The current forward earnings multiple sits at approximately 29x 2026 consensus EPS, a number that looks aggressive until you recognize that AMD is growing earnings at a 40%+ CAGR. A 29x multiple on 40%+ EPS growth is not expensive; it is cheap. For comparison, when Nvidia (NVDA) was in the equivalent position before the ChatGPT boom in late 2022, its PEG ratio was 0.5, even more discounted than AMD is today, and what followed was one of the greatest single-stock runs in market history. The parallel is imperfect but instructive: the market systematically undervalues semiconductor companies at inflection points, and AMD at $202.62 with a 0.70 PEG and 40%+ EPS growth is exhibiting the classic characteristics of exactly that setup.
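
The re-rating arithmetic can be checked with a short sketch. It uses only the price and PEG figures quoted above and assumes, as a simplification rather than anything the analysis states outright, that price scales in proportion to PEG while earnings and growth estimates stay fixed. The function names are illustrative.

```python
# Back-of-the-envelope PEG re-rating with earnings and growth held constant.
# Inputs are the figures quoted in the article; proportional scaling of price
# with PEG is a simplifying assumption, not a stated valuation model.

def peg_discount(peg: float, sector_peg: float) -> float:
    """Fractional discount of a stock's PEG to the sector median."""
    return 1.0 - peg / sector_peg

def rerated_price(price: float, peg: float, target_peg: float) -> float:
    """Price implied if PEG moves to target_peg with EPS and growth unchanged."""
    return price * target_peg / peg

PRICE = 202.62
AMD_PEG = 0.70
SECTOR_PEG = 1.27

discount = peg_discount(AMD_PEG, SECTOR_PEG)
full_rerating = rerated_price(PRICE, AMD_PEG, SECTOR_PEG)
upside = full_rerating / PRICE - 1.0
peg_at_350 = AMD_PEG * 350.0 / PRICE  # PEG that a $350 price would correspond to

print(f"Discount to sector PEG: {discount:.1%}")
print(f"Price at sector PEG:    ${full_rerating:,.2f} ({upside:.1%} upside)")
print(f"PEG implied by $350:    {peg_at_350:.2f}")
```

Under these assumptions a full re-rating lands in the high $360s, and a $350 price corresponds to a PEG of about 1.2, slightly below the sector median.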

Q1 2026 Revenue Guided at $9.8 Billion — The China Factor and What It Actually Means

AMD guided Q1 2026 revenue to approximately $9.8 billion, a figure that includes roughly $100 million in China sales under the export control environment that has been reshaping the addressable market for U.S. chip companies. In Q4 2025, AMD generated approximately $390 million in China revenue, and the Q1 guidance reflects a normalized China exposure that is meaningful but not the dominant driver of the growth story. More important than the China revenue figure is what Q4 revealed about the underlying business structure. Q4 included a reserve reversal of approximately $360 million, an accounting item that technically inflated the reported quarter, but stripping that out reveals a stable CPU demand picture and resilient GPU sales, confirming that the thesis does not depend on one-time items or accounting adjustments. The data center business is growing and offsetting seasonal weakness in the client and gaming segments, which is exactly the revenue mix evolution that bulls have been waiting to see confirmed in reported financials. Eight of the top ten hyperscalers globally have AMD's Instinct GPUs in production. EPYC cloud instances grew over 50% in 2025, reaching approximately 1,600 deployed instances. These are not speculative forward projections; they are reported metrics that establish AMD as a legitimate, scaled participant in enterprise AI infrastructure rather than a challenger still fighting for relevance.

The Samsung HBM4 MOU: Why March 18 Was the Most Important Date in AMD's 2026 Calendar

13 Gbps, 3.3 TB/s Bandwidth — The Memory Architecture That Changes Everything for MI455X

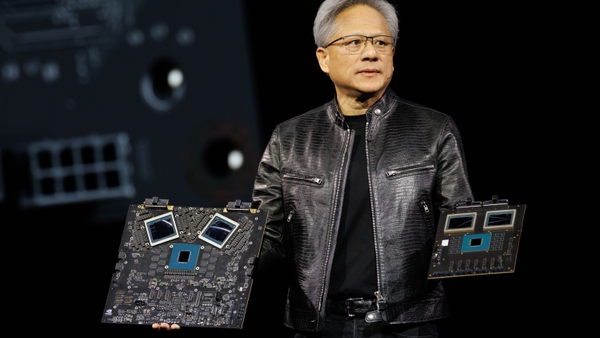

The most significant development for AMD in the past several weeks received far less market attention than it deserved. On March 18, 2026, AMD and Samsung (SSNLF) signed a Memorandum of Understanding that makes Samsung the primary HBM4 supplier for the MI455X GPU, establishes Samsung as a DDR5 partner for the 6th Generation EPYC Venice processor, and opens discussions for foundry services. Samsung's HBM4 technology is described as mass production ready, with speeds up to 13 Gbps and bandwidth of 3.3 TB/s. The headline from Nvidia's GTC conference that everyone was focused on was Jensen Huang's vision for a trillion-dollar AI infrastructure empire. The headline they missed was AMD solving its supply chain risk before its most important product launch. The MI455X is rumored to feature 432GB of HBM4 memory — a figure that is significantly higher than what Nvidia's Rubin architecture is expected to offer. Memory capacity is not a secondary specification in the 2026 AI infrastructure market — it is the primary bottleneck. Jensen Huang himself identified memory as the most critical limiting factor in AI advancement for 2025 and 2026. Agentic AI systems require persistent context, large model states, and real-time reasoning across complex multi-step workflows — all of which are memory-bandwidth constrained, not compute-constrained. If AMD can run larger models on fewer GPUs because of superior HBM4 capacity, it delivers dramatically higher performance per watt and per dollar — the two metrics that matter most in a world where hyperscalers are being forced by private credit tightening to operate with cost discipline they have not exercised in the past three years.
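
The 13 Gbps and 3.3 TB/s figures are two views of the same number, and a quick calculation shows how they relate. The sketch below assumes a 2048-bit interface per HBM4 stack, which is the width in the JEDEC HBM4 standard but is not stated in the announcement as quoted here, so the result should be read as a per-stack peak under that assumption. The function name is illustrative.

```python
# Peak bandwidth of a single HBM stack from its per-pin data rate.
# ASSUMPTION: 2048-bit interface per stack (JEDEC HBM4 width); the article
# quotes only the 13 Gbps pin speed and the 3.3 TB/s bandwidth figure.

def stack_bandwidth_tb_per_s(pin_speed_gbps: float, interface_bits: int = 2048) -> float:
    """Peak bandwidth of one stack in TB/s, using decimal units (1 TB = 1e12 bytes)."""
    bits_per_second = pin_speed_gbps * 1e9 * interface_bits
    bytes_per_second = bits_per_second / 8
    return bytes_per_second / 1e12

per_stack = stack_bandwidth_tb_per_s(13.0)
print(f"{per_stack:.2f} TB/s per stack at 13 Gbps per pin")
```

Total bandwidth for a GPU would be this per-stack figure multiplied by the number of stacks on the package, which is not given here.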

From Accelerators to Full Rack Economics: Helios and Venice Define AMD's Platform Play

The conversation about AMD has been stuck in the wrong frame for two years. Analysts have been asking whether AMD's MI300 and MI350 GPUs can compete with Nvidia on benchmark performance — a question that completely misses where the actual competitive battle is moving. The benchmark war is over. The platform war is just beginning. AMD has explicitly guided that 2026 is an inflection point where MI450 revenue begins in Q3 and ramps substantially in Q4, with the critical detail being that the vast majority of 2026 MI450 revenue comes from RackScale solutions rather than standalone accelerators. This is not a semantic distinction — it is a fundamental shift in how AMD is monetizing its AI technology. Selling a GPU is a one-time transaction with a defined ASP and margin structure. Selling a RackScale solution — where AMD's GPUs, CPUs, memory architecture, networking, and software stack are integrated into a complete deliverable — is a platform sale with higher ASPs, better margins, longer customer relationships, and significantly higher switching costs. The Helios rack architecture, built on open standards and supported by the ROCm software ecosystem, gives enterprise customers complete control over their AI infrastructure without locking them into a proprietary stack. CEO Lisa Su specifically called out "extremely high customer pull" for Venice — the 6th Gen EPYC CPU platform — with large-scale cloud engagement already underway. EPYC's growth within the AI solution is not a sideshow to the GPU story. In the inference era, CPU orchestration, preprocessing, storage-centric workloads, and agent-based workflows that spawn into traditional compute are all CPU-bound. AMD with dominant EPYC share plus growing Instinct GPU share plus Helios RackScale architecture is a fundamentally different business than AMD the GPU challenger of 2023.

The Private Credit Bubble: Why $1.8 Trillion in Strain Is Paradoxically Bullish for AMD

Default Rates Above 9%, CoreWeave Stalled, Blue Owl Struggling — The GPU Financing Model Is Breaking

The $1.8 trillion private credit sector that has been the primary financing engine for hyperscaler data center expansion is showing unmistakable signs of stress, with default rates now estimated by some analysts to exceed 9%. The specific manifestation that matters most for the AMD thesis is what is happening to CoreWeave — one of the largest GPU-focused hyperscalers — whose expansion plans have been stalled because its primary credit backer Blue Owl has struggled to raise the necessary capital. When CoreWeave can't finance new GPU clusters, the downstream effect is not simply slower growth — it is a forced pivot in the entire economics of AI infrastructure spending. The projects that get cut first are the massive pre-training clusters with 10,000+ GPU configurations, because those require the largest capital outlays and have the longest depreciation timelines relative to the pace of model obsolescence. GPU depreciation timelines for Nvidia's high-end hardware are already being questioned by analysts like Michael Burry, who has publicly challenged whether many of these data center projects make genuine economic sense when the accounting depreciation schedules are stress-tested against actual hardware replacement cycles. Pre-training clusters that cost hundreds of millions of dollars and depreciate over 3-5 years but become technically obsolete in 12-18 months represent a fundamental mismatch between accounting reality and operational reality — and when private credit tightens, that mismatch gets exposed. What survives credit tightening is inference compute — smaller, more profitable, more capital-efficient deployments that generate revenue on a per-token basis and have been shown to deliver attractive gross margins to the foundation model companies operating them. Research from multiple independent sources has confirmed that most major foundation model companies are already profitable on a gross margin basis for post-training inference token production. 
That is the market AMD is specifically positioned to dominate.
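
The depreciation mismatch described above can be made concrete with a minimal straight-line sketch. The $300 million cluster cost is a hypothetical stand-in for "hundreds of millions of dollars", and straight-line depreciation with no salvage value is an assumption; only the 3 to 5 year schedules and the 12 to 18 month obsolescence window come from the text.

```python
# Book value still on the balance sheet when hardware becomes obsolete.
# ASSUMPTIONS: straight-line depreciation, zero salvage value, and a
# hypothetical $300M cluster cost chosen purely for illustration.

def book_value(cost: float, useful_life_years: float, age_years: float) -> float:
    """Straight-line net book value at a given age, floored at zero."""
    remaining_fraction = max(0.0, 1.0 - age_years / useful_life_years)
    return cost * remaining_fraction

CLUSTER_COST = 300e6  # hypothetical

for life in (3, 5):                  # accounting schedules cited in the text
    for obsolete_at in (1.0, 1.5):   # 12 and 18 month obsolescence
        stranded = book_value(CLUSTER_COST, life, obsolete_at)
        share = stranded / CLUSTER_COST
        print(f"{life}-year schedule, obsolete at {obsolete_at:.1f}y: "
              f"${stranded / 1e6:,.0f}M undepreciated ({share:.0%} of cost)")
```

In every combination at least half of the original cost is still undepreciated at the point the hardware is assumed to be obsolete, which is the gap that tighter credit exposes.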

The OpenAI and Meta Deals Signal the CUDA Monopoly Is Cracking — What Comes Next Is Warrant-Free

AMD's warrant-based partnership with OpenAI, announced in late 2025, was initially viewed with skepticism because of the dilutive structure — warrants are shareholder-unfriendly instruments that represent contingent equity dilution in exchange for commercial access. But the strategic logic behind the deal is becoming clearer in retrospect. Getting OpenAI to integrate AMD hardware into its production AI stack was not a commercial transaction — it was a reference customer acquisition that changes the risk calculus for every other CTO in the hyperscaler ecosystem. When OpenAI runs AMD GPUs, the risk premium that other enterprises attach to switching from Nvidia's CUDA ecosystem toward AMD's ROCm alternative drops substantially. The "what if it doesn't work" anxiety that has been Nvidia's most powerful competitive moat — not the technology itself but the fear of unknown ROCm compatibility issues — starts to dissolve when the world's most prominent AI company publicly validates AMD's hardware in production. In meetings with Wall Street, AMD management has explicitly signaled that future large deals — the gigawatt-scale data center contracts comparable to the OpenAI relationship — will not require warrants. The value of the OpenAI relationship was never the revenue; it was the permission structure it creates for other hyperscalers to follow without feeling like they are making an unvalidated bet on unproven technology. The Meta (META) engagement tells the same story. When Meta and OpenAI are both running AMD hardware, the CUDA lock-in argument loses its absolute character — it becomes a matter of cost-benefit analysis, not existential risk management. In a credit-constrained environment where cost matters more than it did in 2023, that shift in risk perception is enormously valuable.

Nvidia's GTC 2026 Vertical Integration Strategy: Why AMD Is the Direct Beneficiary

Vera CPU, Full-Stack Ambitions, and Why Hyperscalers Don't Want to Be Locked In

Nvidia's GTC 2026 presentation unveiled a vision for complete vertical integration across the AI infrastructure stack — from the Vera custom CPU to networking, storage, software orchestration, and full-rack solutions. Jensen Huang's ambition is to make Nvidia the single vendor for every layer of AI infrastructure, capturing the entire margin stack from silicon to software. This strategy is rational from Nvidia's perspective — it expands the total addressable market and creates customer dependencies that are extraordinarily difficult to unwind once established. But it is creating exactly the dynamics that AMD needs to accelerate its market share gains. Hyperscalers — the Googles, Metas, Amazons, and Microsofts of the world — have spent decades building technology stacks precisely to avoid single-vendor dependencies. The nightmare scenario for a hyperscaler CTO is a world where one company controls the CPU, GPU, networking, software stack, and pricing across their entire AI infrastructure budget. Nvidia's vertical integration ambition is that nightmare scenario made concrete. Every time Nvidia expands into another layer of the stack, it creates fresh urgency among hyperscalers to develop alternative sourcing relationships. AMD's Helios architecture — built on open standards, compatible with existing x86 infrastructure, supporting seamless CPU-GPU integration without requiring a wholesale departure from established software ecosystems — is the direct alternative. Customers who deploy AMD retain architectural control. They can mix and match components, use their existing code bases without rewriting for a new ISA, and avoid the proprietary lock-in that Nvidia's Vera CPU introduces by pulling customers away from the x86 ecosystem into an ARM-based alternative that Nvidia controls exclusively.

432GB HBM4 vs Nvidia Rubin — AMD Wins the Memory War That Jensen Huang Said Matters Most

The MI455X's rumored 432GB of HBM4 memory deserves to be examined against Nvidia's competitive response, because this is where AMD has a genuine technical advantage that is currently being underappreciated by a market still focused on CUDA ecosystem dominance. Nvidia's next-generation architecture is not expected to match the MI455X's memory capacity — a gap that matters enormously in the inference-dominated workloads that will define the 2026-2027 AI infrastructure market. Larger HBM capacity means larger models can fit on a single GPU or a smaller cluster of GPUs, reducing the inter-GPU communication overhead that is one of the primary efficiency drains in large distributed inference systems. Running a 70B parameter model on 4 AMD MI455X GPUs instead of 8 Nvidia competitors is not just a cost saving — it is a latency improvement, an energy efficiency gain, and a rack density advantage that compounds across thousands of inference requests per second at production scale. Samsung's HBM4 at 13 Gbps and 3.3 TB/s bandwidth is mass production ready — a critical de-risking of the supply chain that removes one of the most frequently cited bear arguments against AMD's roadmap. Supply chain execution risk has been a legitimate concern for AMD in the past, and the Samsung MOU directly addresses it by establishing an aligned primary supplier relationship before the MI455X launch rather than scrambling for allocation after demand materializes.
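
How memory capacity translates into GPU count can be sketched with a rough sizing calculation. Everything beyond the 432GB figure is an assumption for illustration: the 288 GB comparison capacity, the 90% usable-memory fraction, and the 1,200 GB serving footprint are hypothetical, and real deployments also depend on KV cache size, batch size, and parallelism strategy. Note that the weights of a 70B-parameter model at 16-bit precision take about 140 GB, so the capacity gap matters most for larger models and long-context serving.

```python
import math

# Rough GPU-count sizing from memory capacity alone.
# ASSUMPTIONS: 90% of HBM is usable, and the comparison capacity and
# large-model footprint below are hypothetical values, not from the article.

def weights_gb(params_billions: float, bytes_per_param: float) -> float:
    """Memory for model weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bytes_per_param

def gpus_needed(total_gb: float, gpu_capacity_gb: float, usable_fraction: float = 0.9) -> int:
    """Minimum number of GPUs whose combined usable memory holds total_gb."""
    return math.ceil(total_gb / (gpu_capacity_gb * usable_fraction))

MI455X_GB = 432      # rumored capacity cited in the article
COMPARISON_GB = 288  # hypothetical comparison part

small = weights_gb(70, 2.0)  # 70B parameters at 16-bit precision
large = 1200.0               # hypothetical weights plus KV cache footprint

for label, footprint in (("70B @ 16-bit weights", small), ("hypothetical 1,200 GB job", large)):
    a = gpus_needed(footprint, MI455X_GB)
    b = gpus_needed(footprint, COMPARISON_GB)
    print(f"{label}: {a} GPU(s) at {MI455X_GB} GB vs {b} at {COMPARISON_GB} GB")
```

Fewer GPUs per model instance means less inter-GPU traffic, which is the efficiency argument the paragraph makes.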

AMD Stock (NASDAQ: AMD) at 6.9x Forward EV/Sales vs Nvidia at 11.2x and Broadcom at 14.5x

The Valuation Table That Makes the Investment Case Self-Evident

The relative valuation comparison across the semiconductor AI infrastructure peer group is perhaps the clearest single-page argument for AMD at $202.62. AMD trades at approximately 6.9x forward EV/Sales. Nvidia (NVDA) trades at approximately 11.2x. Broadcom (AVGO) trades at approximately 14.5x. A 6.9x EV/Sales multiple against a company growing data center revenues at the rate AMD is growing them — with EPYC instances up 50%+ in 2025 and MI450 RackScale revenue beginning in Q3 2026 — is a multiple that implies the market either does not believe the growth is real or does not believe the margins will follow. The forward P/E of approximately 30x and EV/EBITDA of 28.6x suggest something more nuanced: the market believes in the growth but has not yet priced in margin expansion. Compared to Nvidia and Broadcom on EV/EBITDA, AMD is actually trading ahead — which means growth is being credited but the revenue multiple discount persists because the market is waiting for proof that AMD can convert AI infrastructure revenue into the margin structure of a true platform company rather than a component supplier. That proof is exactly what Helios and Venice are designed to deliver. When full RackScale systems are sold — where AMD captures GPU, CPU, memory architecture, and system integration margin simultaneously — the revenue per engagement increases dramatically while the hardware cost base does not scale proportionally. That is the margin expansion story the market is waiting to see confirmed in actual reported results, and the guidance that the "vast majority" of 2026 MI450 revenue comes from RackScale solutions is the earliest forward indicator that the confirmation is coming.

The CPU Business Nobody Is Talking About: EPYC Venice and 1,600+ Cloud Instances

The semiconductor investment community has become so fixated on the GPU race that AMD's CPU business — which is both larger and more mature than the GPU segment — is being analyzed as an afterthought. This is a material analytical error. EPYC Venice, the 6th generation server CPU platform, has generated what Lisa Su described as "extremely high customer pull" with large-scale engagement already happening across cloud providers. EPYC cloud instances reached approximately 1,600 deployed configurations at the end of 2025, having grown more than 50% during the year. These are not pilot deployments or test clusters — they are production infrastructure decisions by cloud providers who chose AMD's x86 architecture over Intel alternatives based on performance, power efficiency, and TCO. In the inference-dominated AI workloads of 2026, the CPU's role is not diminishing — it is expanding. Agentic AI frameworks require CPU-driven orchestration layers that manage task decomposition, context retrieval, tool calling, and output synthesis. Each of those functions runs on CPU compute. An enterprise deploying 100 Instinct MI450X GPUs for inference also needs substantial EPYC server capacity to run the orchestration layer that makes those GPUs productive. AMD sells both sides of that equation. Nvidia, despite the Vera CPU introduction, is asking customers to abandon x86 and adopt a proprietary ARM implementation that requires significant software migration investment. AMD asks nothing — existing x86 code runs natively on EPYC without modification. In a world where engineering resources are finite and migration risk is real, that compatibility advantage is worth billions of dollars in customer preference that does not show up in any benchmark comparison.

The Bear Case: CUDA Moat, Execution Risk, and Why 2026 Is a Proof-or-Bust Year

If Helios and Venice Miss, the Entire Thesis Is at Risk — Here Is the Real Downside

Intellectual honesty requires addressing the bear case directly, because AMD at $202.62 is not without risk. The most legitimate bear argument is not about technology — it's about execution. The MI450 and Helios system launches in Q3-Q4 2026 are the single most important commercial deliverables in AMD's history. If customer ramps for full RackScale solutions disappoint — if hyperscalers order standalone accelerators rather than the complete Helios systems that carry higher ASPs and better margins — the earnings growth story gets pushed out by multiple quarters and the 30x forward P/E multiple becomes indefensible. China remains a wildcard that cuts both ways. The $100 million Q1 2026 China guidance is manageable, but any escalation in export control enforcement could reduce that to zero with minimal notice, creating a revenue gap that AMD cannot easily fill in the near term. Nvidia's CUDA ecosystem remains the path of least resistance for most enterprise AI deployments. The switching cost is not just technical — it is organizational. Developer teams trained on CUDA, infrastructure teams familiar with NIM containerization, software libraries built on cuDNN — these are real friction points that make enterprise IT departments hesitant to migrate even when AMD's hardware economics are superior. The warrant structure from the OpenAI deal, while strategically sound, creates dilution for existing shareholders that has been a source of selling pressure and justified skepticism about AMD's need to incentivize adoption. Future deals without warrants — as management has signaled — will eliminate this concern, but existing dilution is already baked in. The AMD stock profile and insider transaction history should be monitored closely as RackScale ramp guidance approaches Q3 — insider buying ahead of the MI450 launch would be a significant additional signal of management confidence, while selling would warrant reassessment of the thesis timeline.

The Buy Decision: Strong Buy at $202.62 With a 12-Month Target of $280-$350 — Here Is the Complete Case

AMD at $202.62 is a strong buy. The three-part convergence is not a speculative thesis: private credit tightening is accelerating the inference compute pivot, Samsung HBM4 is de-risking the supply chain for MI455X, and Nvidia's vertical integration strategy is creating hyperscaler demand for an open alternative. Each element is documented. Analyst estimates of private credit default rates above 9% and CoreWeave's stalled expansion are on the record. The Samsung MOU signed March 18 is a public announcement. Nvidia's Vera CPU and full-stack ambitions were presented at GTC. The valuation at 6.9x forward EV/Sales versus Nvidia at 11.2x and Broadcom at 14.5x prices in the skepticism without pricing in the execution. The forward PEG of 0.70 against a sector median of 1.27 implies a little over 81% upside at a full re-rating to the sector median, or roughly $368, which puts a target of approximately $350 within reach if the market simply stops discounting AMD's growth credibility at a 44% haircut to peers. A more conservative re-rating to roughly 9.5x EV/Sales, still below Nvidia and well below Broadcom, would imply a price closer to $280. Both scenarios represent substantial upside from $202.62. The risk-reward is asymmetric in the bull's favor: the downside if Helios disappoints is a multiple compression toward 5x EV/Sales that implies roughly $140-$160, while the upside if Helios and Venice execute to guidance is a re-rating toward $300-$350 as the market recognizes what AMD is becoming. The 40%+ EPS CAGR, the 50%+ EPYC growth in 2025, the 8 of 10 top hyperscalers running Instinct in production, the 1,600+ EPYC cloud instances, and the $9.8 billion Q1 revenue guide all confirm the growth is real. The market's 0.70 PEG says it doesn't believe in the durability. The Samsung partnership, the RackScale pivot, and the OpenAI-Meta reference customer foundation argue the market is wrong. 
Buy AMD at $202.62 with a 12-18 month target range of $280-$350 and a stop loss on a weekly close below $180.
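
The price targets, the downside case, and the stop level can be tied together in a small scenario sketch. It assumes that share price moves in proportion to the forward EV/Sales multiple, which ignores net cash, share count changes, and revisions to sales estimates, so the outputs are rough cross-checks rather than a valuation model. All names are illustrative; the inputs are the figures quoted in the article.

```python
# Scenario cross-check: EV/Sales multiple implied by each price target,
# the price implied by a compressed multiple, and reward-to-risk against the stop.
# ASSUMPTION: price scales proportionally with the forward EV/Sales multiple.

ENTRY = 202.62      # current price quoted in the article
EV_SALES_NOW = 6.9  # forward EV/Sales quoted in the article
STOP = 180.0        # weekly-close stop level from the article

def implied_multiple(target_price: float) -> float:
    """EV/Sales multiple a target price would correspond to."""
    return EV_SALES_NOW * target_price / ENTRY

def price_at_multiple(multiple: float) -> float:
    """Share price corresponding to a given EV/Sales multiple."""
    return ENTRY * multiple / EV_SALES_NOW

def reward_to_risk(target_price: float, stop: float = STOP) -> float:
    """Upside to target divided by downside to the stop."""
    return (target_price - ENTRY) / (ENTRY - stop)

for target in (280.0, 350.0):
    print(f"${target:.0f}: {target / ENTRY - 1:.0%} upside, "
          f"~{implied_multiple(target):.1f}x EV/Sales, "
          f"reward/risk {reward_to_risk(target):.1f} to 1")

print(f"5.0x EV/Sales downside case: ~${price_at_multiple(5.0):.0f}")
```

On these assumptions the 5x downside case falls inside the $140-$160 range quoted above, and the stop at $180 caps the planned loss at about 11% of the entry price.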